The window between a vulnerability being disclosed and a working exploit appearing in the wild is shrinking fast. zerodayclock.com tracks this in real time. The trend line is going in one direction: down.

Every hour your team spends chasing a false positive is an hour not spent patching something that could actually be exploited.

The system we built to surface vulnerabilities in open source is flooding both sides of the equation with noise. Maintainers drown in alerts they can't act on. Consumers drown in findings they can't prioritize.

A tax paid on both sides

If you maintain a popular open source project, you already know. You open GitHub and see 50+ Dependabot alerts. Are they exploitable? Almost certainly not. But proving that takes work.

You can't ignore them either. Users see those alerts when they install your package. They file issues. They open PRs bumping dependencies you never directly use. Some of those bumps introduce breaking changes. Now you're debugging compatibility regressions caused by a CVE that was never exploitable in the first place.

Analyzing each alert to prove it's not exploitable is a full-time job. Even if you do the work, there's no standard way to communicate the result. No machine-readable format that says "yes, we know about CVE-2026-XXXXX, no, it doesn't affect you, here's why." So you close the alert with a comment. The next user opens the same issue. The cycle repeats.

If you consume open source (meaning: every software team), the pain is different. You pull in a dependency. Your scanner lights up. You escalate to the maintainer. They're a two-person team maintaining this project on evenings and weekends. They don't have time to investigate your specific alert. You're stuck with two options: trust them and accept the risk on paper, or spend your own engineering time proving it's not exploitable.

Multiply that across every OSS dependency in your stack. The average application has hundreds. Your security team doesn't have bandwidth to analyze each one. Your engineering team doesn't want to touch dependencies they didn't choose. The scanner doesn't care. It keeps generating findings.

Most of the time the alert sits in a backlog. Nobody triages it. Nobody closes it. It becomes background noise.

CVSS scores tell you severity in a vacuum. They describe what could happen if all the preconditions align. They don't tell you whether a vulnerability is actually exploitable in context. That gap between theoretical severity and actual exploitability is where all the wasted time lives.

Metabase: 53 vulnerabilities, zero exploitable

We wanted to see how bad this actually is on a real project.

We forked the latest version of Metabase, a popular open source analytics platform with 40k+ GitHub stars. Enabled Dependabot. It found 53 vulnerabilities. 26 were rated high severity.

We ran exploitability analysis on all 53. For each finding, we checked whether the vulnerable code is actually reachable, whether the exploit preconditions are satisfied, and whether attacker-controlled data can reach the vulnerable function in the way the exploit requires.
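The three checks combine as a chain: an exploit needs all of them to hold, so a single definite "no" is enough to rule a finding out. Here is a minimal Python sketch of that decision logic. The `Finding` record, its field names, and the verdict labels are hypothetical, chosen for illustration; this is not the actual analysis engine.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    """Hypothetical record of the three checks for one advisory."""
    advisory_id: str
    # None means the check was inconclusive.
    code_reachable: Optional[bool]
    preconditions_met: Optional[bool]
    attacker_input_reaches: Optional[bool]


def verdict(f: Finding) -> str:
    checks = (f.code_reachable, f.preconditions_met, f.attacker_input_reaches)
    # One definite "no" breaks the exploit chain, whatever the other checks say.
    if any(c is False for c in checks):
        return "false_positive"
    # Every check must be a definite "yes" before calling it exploitable.
    if all(c is True for c in checks):
        return "exploitable"
    # Anything else still needs a human or a deeper analysis.
    return "needs_review"


example = Finding(
    advisory_id="CVE-2026-24842",
    code_reachable=False,
    preconditions_met=False,
    attacker_input_reaches=None,
)
print(verdict(example))  # false_positive
```

Treating an inconclusive check as "needs review" rather than "safe" is a deliberate choice in this sketch: a finding is only dismissed when at least one link in the chain is provably broken.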

| Metric | Count |
|---|---|
| Vulnerabilities reported | 53 |
| High severity | 26 |
| False positives | 53 (100%) |

None of them survived exploitability analysis. The vulnerable code was either unreachable, blocked by how the application is configured, or missing the attacker-controlled inputs the exploit requires.

For the Metabase maintainers, that's 53 alerts that look urgent, require investigation, generate user complaints, and ultimately waste time.

This is one project, one scan. Here are two examples from the high-severity findings.

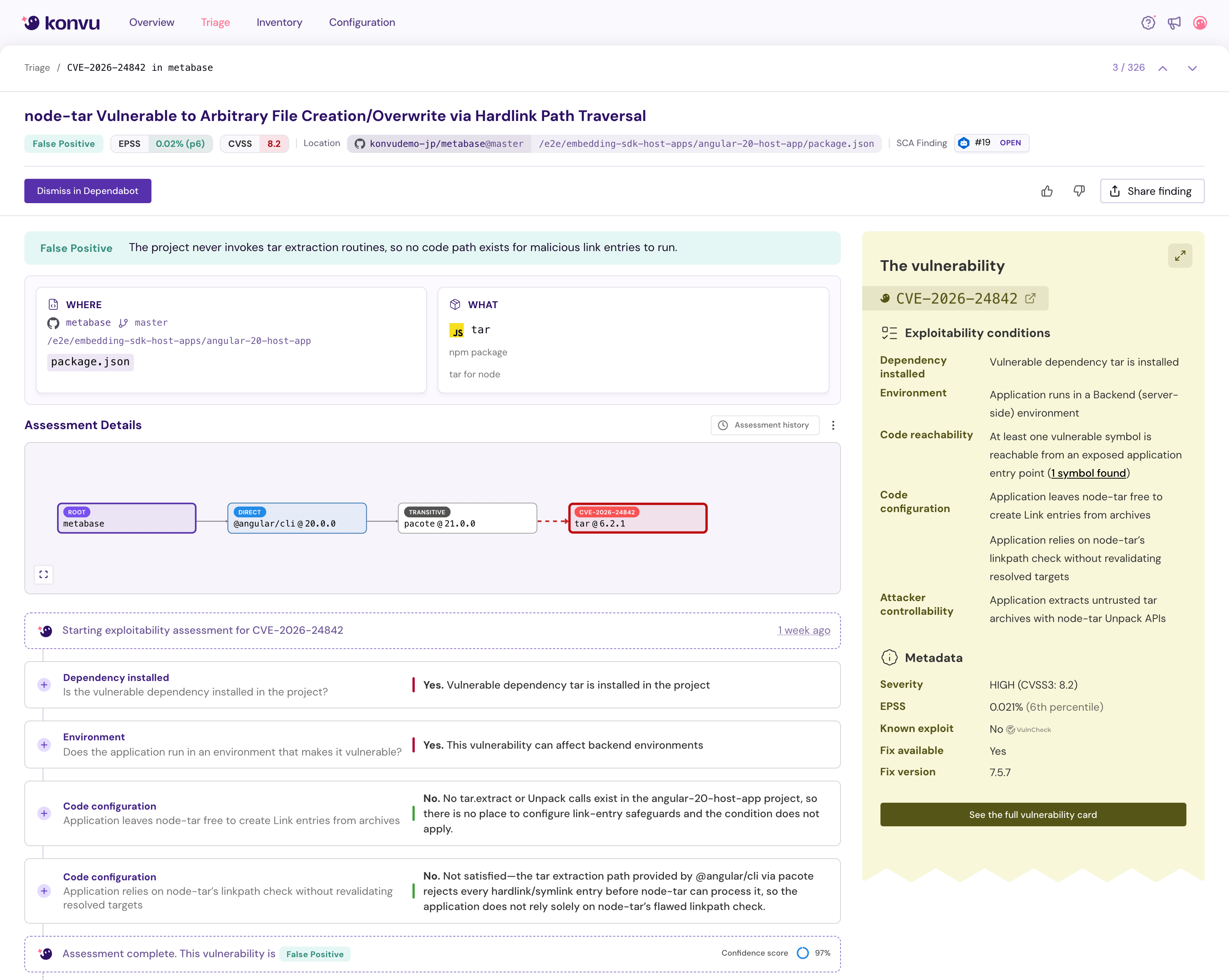

Example 1: CVE-2026-24842, node-tar link traversal

The alert: CVSS 8.2, rated High. node-tar is vulnerable to malicious link entries that can escape the extraction target directory.

The dependency chain: metabase -> @angular/cli -> pacote -> tar. Located in /e2e/embedding-sdk-host-apps/angular-20-host-app/package.json. Four levels deep.

Konvu ran exploitability analysis against this finding. Here's what it checked.

Is the vulnerable dependency installed? Yes. tar is present in the dependency tree.

Does the vulnerability apply to this environment? Yes. This is a backend/Node.js context where the vulnerability can have effect.

Does the application call tar.extract or Unpack? No. No calls to tar.extract() or the Unpack class exist anywhere in the angular-20-host-app project. The vulnerable code paths are never invoked.

Does the application rely on node-tar's linkpath check without revalidating? Not satisfied. The tar extraction path provided by @angular/cli via pacote rejects every hardlink and symlink entry before node-tar can process them. The application does not rely solely on node-tar's flawed linkpath check.

Verdict: False positive, 97% confidence. The project never invokes tar extraction routines, so no code path exists for malicious link entries to execute.

Every step in this analysis is documented and auditable. "It's not exploitable, trust us" doesn't fly in a security context. Teams need to see the work.

This analysis took seconds with tooling. Without it, a maintainer has to trace the dependency chain, read the CVE advisory, grep for usage patterns, understand how pacote wraps tar, and verify that no extraction calls exist. That's 30 to 60 minutes of focused work for one vulnerability. Across all 53, that comes to roughly 26 to 53 hours.
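To give a sense of what just the "grep for usage patterns" step involves by hand, here is a rough Python sketch that scans a project's own source files for direct calls to `tar.extract()` or the `Unpack` class. The regular expression, the file-suffix list, and the function name `find_extract_calls` are assumptions made for illustration. It only covers the literal call forms named above, not aliased imports or calls made inside dependencies.

```python
import re
from pathlib import Path

# Assumed patterns for direct node-tar extraction calls. Aliased imports
# (e.g. destructured `extract`) would need additional patterns.
EXTRACT_CALL = re.compile(r"\btar\.extract\s*\(|\bnew\s+(?:tar\.)?Unpack\s*\(")
SOURCE_SUFFIXES = {".js", ".mjs", ".cjs", ".ts", ".tsx"}


def find_extract_calls(root: str) -> list[tuple[str, int, str]]:
    """Return (file, line number, line text) for each match under root.

    Files inside node_modules are skipped, so this only answers whether
    first-party code calls the extraction routines directly.
    """
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if "node_modules" in path.parts or path.suffix not in SOURCE_SUFFIXES:
            continue
        if not path.is_file():
            continue
        text = path.read_text(errors="ignore")
        for lineno, line in enumerate(text.splitlines(), 1):
            if EXTRACT_CALL.search(line):
                hits.append((str(path), lineno, line.strip()))
    return hits
```

Calling `find_extract_calls()` on the host app directory and getting an empty list would cover only the first of the manual steps. It says nothing about how pacote wraps tar, which is why the remaining steps still take a person most of an hour.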

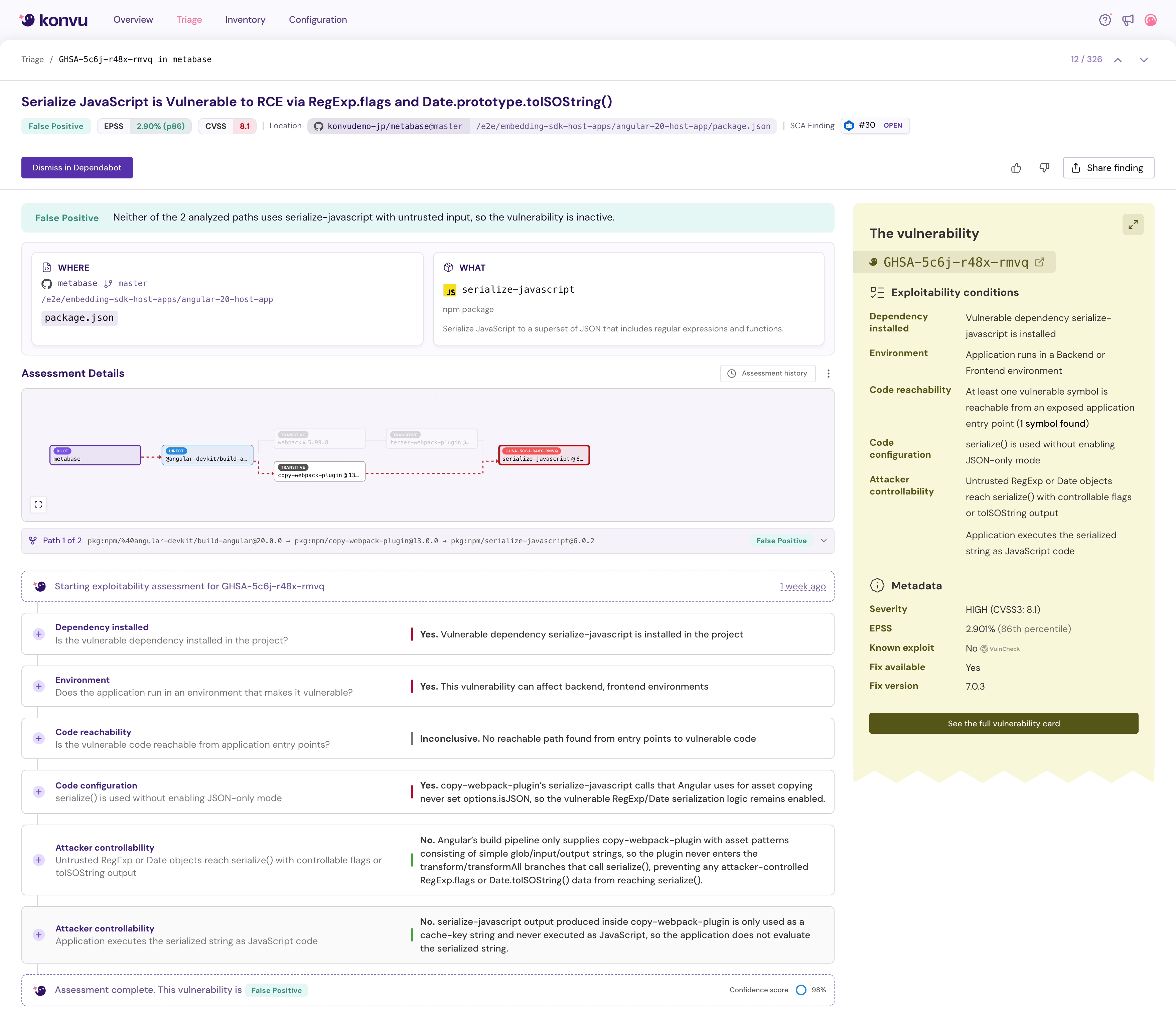

Example 2: GHSA-5c6j-r48x-rmvq, serialize-javascript RCE

The alert: CVSS 8.1, rated High. EPSS 86th percentile. serialize-javascript is vulnerable to remote code execution via crafted RegExp.flags and Date.prototype.toISOString(). "Remote Code Execution" right in the title.

The dependency chain: metabase -> @angular-devkit/build-angular -> copy-webpack-plugin -> serialize-javascript. Also reachable through webpack -> terser-webpack-plugin. Multiple paths to the same vulnerable package.

Konvu ran the same structured analysis.

Is the vulnerable dependency installed? Yes. serialize-javascript is present in the dependency tree.

Does the vulnerability apply to this environment? Yes. Applies to both backend and frontend environments.

Is there a reachable path from entry points to the vulnerable code? Inconclusive. No reachable path was found from application entry points to the vulnerable serialize() function.

Is serialize() used without enabling JSON-only mode? Yes. The vulnerable RegExp and Date serialization logic remains enabled. The safe configuration is not active.

Can an attacker control data reaching serialize()? No. copy-webpack-plugin only supplies simple glob, input, and output strings as asset patterns. The plugin never enters the transform or transformAll branches that call serialize(). Attacker-controlled RegExp.flags or Date.toISOString() data cannot reach the vulnerable function.

Is the serialize output executed as JavaScript? No. The output from serialize-javascript inside copy-webpack-plugin is only used as a cache-key string. It is never evaluated or executed as JavaScript.

Verdict: False positive, 98% confidence. The vulnerable function is present and technically not in safe mode. But no attacker-controlled data can reach it, and its output is never executed.

CVSS 8.1 with "Remote Code Execution" in the title. Without context, this is a fire drill. With exploitability analysis, it's a documented non-issue.

This is getting worse

On the attack side, AI is accelerating vulnerability discovery at a pace that wasn't possible a year ago. Anthropic just announced Project Glasswing, a defensive initiative deploying a frontier model that autonomously found thousands of zero-day vulnerabilities in major operating systems and browsers, including a 27-year-old flaw in OpenBSD that survived decades of human review.

The model surpasses all but the most skilled humans at finding and exploiting software vulnerabilities. That capability is being used defensively today. It won't stay defensive forever. When AI can find vulnerabilities faster than humans can triage them, the false positive tax becomes even more dangerous.

On the defense side, maintainers are already underwater. They're expected to triage more findings, faster, with the same resources. A two-person team maintaining a library used by thousands of companies cannot analyze every CVE that touches their dependency tree. They can't fix everything either. Some fixes require breaking changes. Some need upstream patches in dependencies they don't control. Some are theoretical issues that have never been exploited in the wild and probably never will be.

The math doesn't work. More vulnerabilities reported. Same number of maintainers. Shrinking exploit timelines. Something has to give, and right now what's giving is accuracy. Teams are either ignoring alerts wholesale or burning cycles on findings that don't matter.

What needs to change

Stop treating every CVE as a fire drill. CVSS alone is not enough. A score tells you severity in isolation. It does not tell you whether the vulnerability is exploitable in your application, with your configuration, through your dependency chain. Context-aware exploitability analysis needs to become the default first step after detection.

Give maintainers a way to communicate exploitability. VEX (Vulnerability Exploitability eXchange) exists, but adoption is early. Maintainers need lightweight tooling to produce structured, auditable, machine-readable evidence that says "this CVE does not affect our project, and here is why." Not a GitHub comment. Not "won't fix" with no explanation. A document that downstream consumers and their scanners can ingest automatically.
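As a rough illustration of what such a document could contain, here is a small Python sketch that builds a "not affected" statement for the node-tar finding from Example 1 and wraps it in a VEX-style JSON document. The field names are modeled on the OpenVEX layout and should be checked against the current specification before use. The helper names, the document ID, the author string, and the product identifier are placeholders invented for this example.

```python
import json
from datetime import datetime, timezone


def not_affected_statement(vuln_id: str, product_id: str,
                           justification: str, impact: str) -> dict:
    """Build one statement saying the product is not affected, and why."""
    return {
        "vulnerability": {"name": vuln_id},
        "products": [{"@id": product_id}],
        "status": "not_affected",
        "justification": justification,
        "impact_statement": impact,
    }


def vex_document(author: str, doc_id: str, statements: list[dict]) -> dict:
    """Wrap statements in a document envelope (OpenVEX-style field names)."""
    return {
        "@context": "https://openvex.dev/ns/v0.2.0",
        "@id": doc_id,
        "author": author,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "version": 1,
        "statements": statements,
    }


statement = not_affected_statement(
    vuln_id="CVE-2026-24842",
    product_id="pkg:github/metabase/metabase",  # placeholder identifier
    justification="vulnerable_code_not_in_execute_path",
    impact=("The project never invokes tar extraction routines, so no code "
            "path exists for malicious link entries to execute."),
)
doc = vex_document(
    author="Example Maintainers",                   # placeholder
    doc_id="https://example.com/vex/example-0001",  # placeholder
    statements=[statement],
)
print(json.dumps(doc, indent=2))
```

The point of the structure is that a downstream scanner can match on the vulnerability ID and product, read the status, and suppress the alert without a human re-reading an issue thread.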

Accept that not every vulnerability needs a patch. "Not exploitable in this context" is a valid, documented outcome. It requires evidence, not hand-waving. But the binary of "patch it or ignore it" doesn't scale when your project has hundreds of transitive dependencies and a new advisory drops every week. Sometimes the right answer is a documented verdict, not a code change. The security industry needs to get comfortable with that.

Detection is solved. The gap is what happens after. With exploit timelines shrinking and vulnerability volume increasing, the tax on open source is only going up. The ecosystem needs better answers than a severity score and a Dependabot alert.